Were the photos above taken of an LCD or a CRT monitor screen? Hard to tell? That's the challenge: making an LCD display resemble the experience we had growing up with CRT technology. Is it authentic enough? Everyone is going to have a different opinion. I find it impressive, even though it's a work in progress and there is always room for improvement.

There has been a substantial amount of work done in recent times in relation to Apple II video hardware. There were two video hardware based presentations at this year's KFest event ("VidHD-style HDMI solution with Ethernet-out bus card to Pi" by John Flanagan and "Yet Another Video Converter with Tang Nano 9K FPGA" by Rob Kim). The "AppleII-VGA" and "∀2 Analog" cards have been improved upon and used at the KFest solder session. The one that sparked my interest the most was ushicow's "Tang Nano 9K Apple II Card". You may have noticed his post in the KFest Discord environment. In the meantime, I've been making progress with my own solutions, the A2VGA IIe and IIc cards. This time it's been on the display quality side of things.

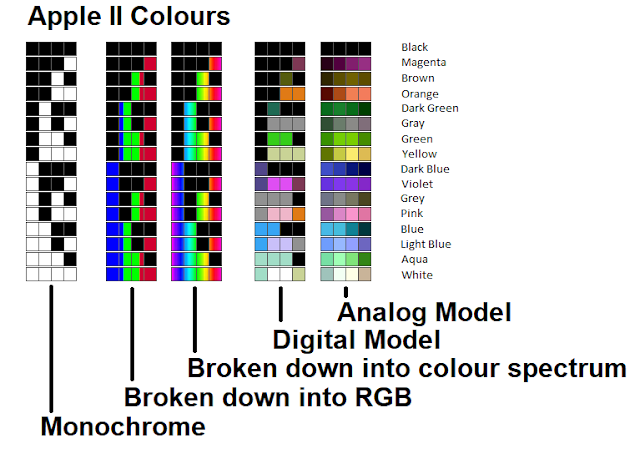

I've been playing around with Apple II colour models for several years and I've tried to understand how the colours relate to each other. When I say colour model, I mean converting the 560x192 monochrome video display (which at its core is what the Apple II really is) into the most visually pleasing coloured display. I started off by using digital models. By this I mean each resultant pixel is one of the sixteen possible Apple II colours. A sixteen colour palette is used to look up the colour to be displayed. The AppleWin - Color (Composite Idealized) is one of these models and was the first model I implemented in my projects, back in 2015. Recently I've been trying to get my head around analog models. This is what I call a model where, instead of picking a colour from a palette, mathematics is used to calculate the required colour. The full range of system colours can be used (A2VGA uses a 16bit / RGB565 colour system).

In this blog entry I'm not going to go into detail as to how composite video works. What is worth noting, however, is that colour on the Apple II revolves around a four pixel block. The reason for this has to do with the pixel clock frequency. When the Apple II requires colour to be output, it sends out a colorburst signal of approximately 3.58MHz. Colour is generated as a phase difference from this signal. If the Apple II's pixel clock was the same frequency as the colorburst then the "ON" part of that signal would output the entire colour spectrum in one bit. This is just a monochrome signal. If the Apple II's pixel clock was 3 times the colorburst frequency, then the colour spectrum would be divided into three pixels. This is basically red, green and blue. However, the Apple II's pixel clock is four times the colorburst, meaning that four pixels cover the colour spectrum. Each pixel is treated as being ninety degrees away from its neighbour. The "ON" or bright parts of the monochrome signal determine which parts of the colour spectrum are added together to form the resultant colour.
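The four-pixel idea can be sketched in a few lines of Python. This is only an illustration, assuming each pixel in a block contributes a unit vector at 0, 90, 180 and 270 degrees; the phase origin, the scaling and the function names are assumptions made for the example, not how any particular card or emulator does it.

```python
import math

def block_to_luma_chroma(bits):
    """Sum four 1-bit pixels that sit 90 degrees apart on the colour wheel.

    Returns (luma, chroma_x, chroma_y). The phase origin and the scaling
    are illustrative assumptions only.
    """
    luma = sum(bits) / 4.0
    chroma_x = 0.0
    chroma_y = 0.0
    for position, bit in enumerate(bits):
        angle = math.radians(90 * position)
        chroma_x += bit * math.cos(angle)
        chroma_y += bit * math.sin(angle)
    return luma, chroma_x, chroma_y

def describe(bits):
    """Human-readable summary of the colour a four-pixel block adds up to."""
    luma, cx, cy = block_to_luma_chroma(bits)
    saturation = math.hypot(cx, cy)
    if saturation < 1e-9:
        return f"luma {luma:.2f}, no chroma (black, grey or white)"
    hue = math.degrees(math.atan2(cy, cx)) % 360
    return f"luma {luma:.2f}, hue {hue:.0f} degrees"

if __name__ == "__main__":
    for pattern in ([1, 0, 0, 0], [1, 1, 0, 0], [1, 0, 1, 0], [1, 1, 1, 1]):
        print(pattern, describe(pattern))
```

Opposite pixels cancel each other out, so a block such as 1010 or 1111 ends up with no chroma at all, while 1100 lands halfway between the first two phases.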

Colour spread can be adjusted by an aperture knob on a CRT monitor / TV. Turning the knob one way spreads the colour out, giving you a nice uniform colour, but the picture looks smooth and fuzzy. What this is doing is covering up the "OFF" or dark pixels (gaps of up to three pixels) that come from the monochrome picture. Turning the knob the other way shortens the colour spread and results in a sharper looking picture. However, if you go too far you will start seeing these dark pixel gaps appear, and these cause the picture to look stripy. This same effect can be achieved by using different models or changing the parameters that generate those models. You'll see this contrast in the models below.

The next part of this blog will show a comparison of these various models as best as I can. Please note that these are going to be photos taken of the display screen so it's not going to be the same as viewing these results on a monitor with one's own eyes. I've used a lot of zoomed in images to give a feel for the differences between the models. Some of these zoomed in images might look a bit trippy but that's not necessarily how they look when viewed on a whole screen.

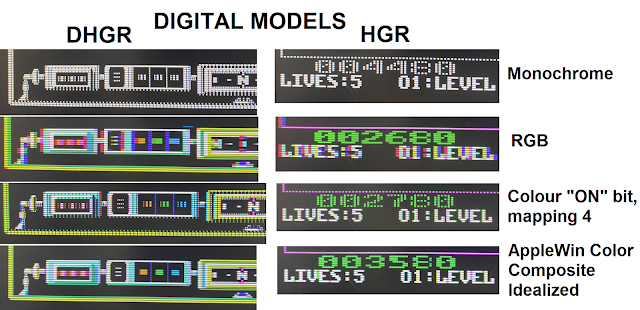

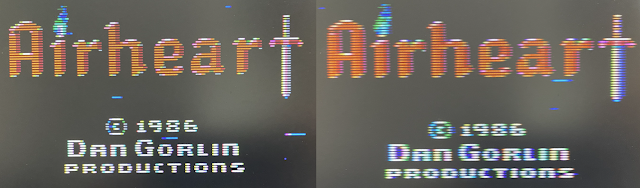

I used the game Vindicator for the capturing of High Resolution Graphics (HGR) and Airheart for Double High Resolution Graphics (DHGR). If you have read any of my previous blogs, then you may have noticed that I use Airheart a lot for testing. For one, it runs in DHGR mode and DHGR is less forgiving than other Apple II modes. Problems tend to stand out more. I do use other Apple II video modes but only after I'm happy with the DHGR results first. Secondly, Airheart allows me to test all the points below in one go.

1. Display of the single width pixel and how colour spreads into the dark pixels. Look at the blue lines at the bottom of the screen. I like them being as thin as possible.

2. Uniformity of solid objects. Look at the red, green and blue squares at the bottom of the screen and the screen borders. This varies from a smooth even look to a sharp stripy look.

3. Moving white objects. The compass text as it spins about should only change the faint glow around the font and have minimal effect on the font colour.

4. Double pixel width fill ins. Look at the score board for digit contrast, fill in colour and colon between digits.

5. Clarity of DHGR text.

6. End tips of the blue water wave lines can show off the oval Gaussian pixel spread typical of analog type signals (hence my preference for VGA over DVI/HDMI when available).

Digital models:

Every colour model takes its source from the monochrome data. The simplest model takes four pixels, determines the colour from the palette of sixteen and then outputs that colour as four pixels. That model is called the RGB model (displayed by some RGB expansion cards). It's very blocky and does not look anything like an Apple II output. This model helps us see that setting the correct pixel location is also important.
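Here is a minimal sketch of that blocky RGB model, assuming the monochrome line is a list of 0/1 values and the palette is any list of sixteen colours. The function name and the bit order (first pixel is the least significant bit) are assumptions for the example; real hardware may order the bits differently.

```python
def rgb_block_model(mono_line, palette):
    """Colour each aligned group of four monochrome pixels as one solid block.

    mono_line: list of 0/1 values whose length is a multiple of four.
    palette:   sixteen colour values (any representation, e.g. RGB565).
    Bit order (first pixel = least significant bit) is an assumption.
    """
    if len(mono_line) % 4 != 0:
        raise ValueError("line length must be a multiple of four")
    if len(palette) != 16:
        raise ValueError("palette must hold sixteen colours")
    out = []
    for start in range(0, len(mono_line), 4):
        block = mono_line[start:start + 4]
        index = sum(bit << n for n, bit in enumerate(block))
        out.extend([palette[index]] * 4)  # same colour for all four pixels
    return out

if __name__ == "__main__":
    placeholder_palette = list(range(16))  # stand-in values, not real colours
    print(rgb_block_model([1, 0, 0, 0, 0, 1, 1, 0], placeholder_palette))
```

Because every block of four comes out as a single colour, a lone "ON" pixel is drawn four pixels wide, and moving that pixel by one position within the block changes the colour rather than the location.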

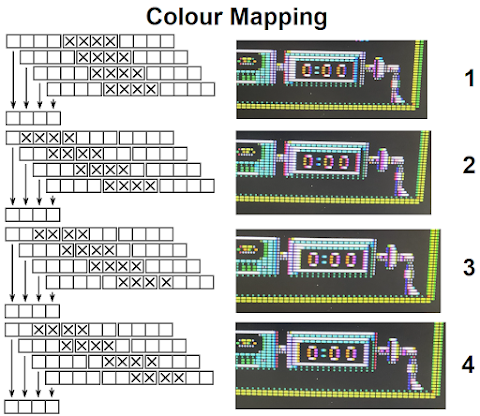

Generating a digital model can be broken down into two stages. The first stage is colour mapping the pixel locations where the monochrome pixels were "ON" or bright. The above diagram shows four different ways in which the source data can be read. Each way results in a slightly different output. The second stage involves filling in the gaps between the coloured pixels. Only gaps of one, two and three pixels need to be filled in. Again, there are multiple ways in which one can choose to fill in the colours.

The result is a model such as AppleWin - Color (Composite Idealized). This model has a very thin fringe of colour around white objects. I played around trying to increase the fringing colour but that just made things worse. It makes it look like the colour is part of the font instead of a faint glow around the font. This model is very sharp, but it comes at a price. Sometimes it creates anomalies, such as on the number 8 in the HGR example above, or less than ideal sharp colours between the letters in the same example.

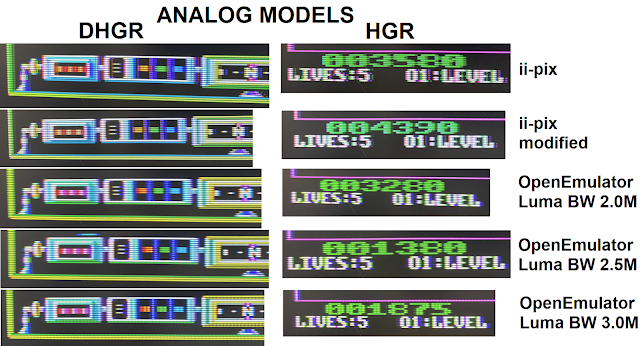

Analog models:

Kris helped me understand how his ii-pix model works. I converted the code for use on the A2VGA cards. Playing around with the model parameters, I got great results.

I then tried converting the OpenEmulator code and had success there as well. The Pi Pico is limited in resources and only runs at around 133MHz, unlike other video solutions that run in the GHz range and have several more cores, so there are obviously limitations with this model, i.e. no barrel or shadow mask effect, limited integer scaling and 16bit colours. Even so, the results are outstanding.

Here is a good comparison of DHGR text. On the left we have the sharp AppleWin Color (Composite Idealized) and on the right we have the smooth but authentic OpenEmulator with the luma bandwidth set at 2.0M. If you find that the OpenEmulator picture is too smooth, then you may be accustomed to thinking that CRT displays were sharper and looked more like today's emulators. You can adjust the OpenEmulator output to be sharper by upping the luma bandwidth, but at a value of 2.5M you will start to see the picture become stripy and by 3.0M the striping is well defined. I've played around with the Chebyshev and Lanczos windows which OpenEmulator uses in its mathematical calculations to see how the output changes, and I've also added a tweak to clean up solid lines.
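To give a feel for what a luma bandwidth setting does, here is a rough sketch of a Lanczos-windowed low-pass filter run over one line of samples. It is not the OpenEmulator code: the sample rate (four times the colorburst), the tap count and the function names are all assumptions chosen for the example.

```python
import math

SAMPLE_RATE_HZ = 4 * 3_579_545  # assumed: pixel clock at four times colorburst

def sinc(x):
    """Normalised sinc, sin(pi x) / (pi x), with the x = 0 case handled."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def lanczos_lowpass(cutoff_hz, taps=17):
    """Build a low-pass kernel: ideal sinc response times a Lanczos window."""
    fc = cutoff_hz / SAMPLE_RATE_HZ      # cutoff in cycles per sample
    half = (taps - 1) / 2
    kernel = []
    for n in range(taps):
        x = n - half
        ideal = 2 * fc * sinc(2 * fc * x)
        window = sinc(x / half)          # Lanczos window, zero at both ends
        kernel.append(ideal * window)
    total = sum(kernel)
    return [k / total for k in kernel]   # normalise so flat areas keep level

def filter_line(samples, kernel):
    """Convolve one line with the kernel, treating off-screen samples as 0."""
    half = len(kernel) // 2
    out = []
    for i in range(len(samples)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            j = i + k - half
            if 0 <= j < len(samples):
                acc += samples[j] * weight
        out.append(acc)
    return out

if __name__ == "__main__":
    line = [0.0] * 20 + [1.0] + [0.0] * 20   # a single "ON" pixel
    for bandwidth in (2.0e6, 2.5e6, 3.0e6):
        peak = max(filter_line(line, lanczos_lowpass(bandwidth)))
        print(f"{bandwidth / 1e6:.1f}M peak {peak:.3f}")
```

A lower cutoff spreads a single pixel's energy over more of its neighbours, which is the smooth look, while a higher cutoff keeps more of it in place, which is where the stripes start to show.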

A link to a video example of the OpenEmulator model: https://drive.google.com/file/d/1MkWuSuV-nNTKfjn8OiW6IyQbo_N3P9YP

During a discussion, a question was asked about how an analog model would look on hardware that had a lower colour range, specifically 9bit / RGB333. I did a simulation and it came out pretty well, much better than I had expected. However, it did struggle with colours that were similar, i.e. in Airheart the noticeable colours were red, orange and brown.
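One simple way to simulate the lower colour range is to drop the low bits of each RGB565 channel. The sketch below assumes plain truncation with no dithering; the function name is made up for the example and this is not necessarily how the simulation above was done.

```python
def rgb565_to_rgb333(pixel):
    """Reduce a 16bit RGB565 value to three 3-bit channels by truncation."""
    red5 = (pixel >> 11) & 0x1F
    green6 = (pixel >> 5) & 0x3F
    blue5 = pixel & 0x1F
    # Keep only the top three bits of each channel.
    return (red5 >> 2, green6 >> 3, blue5 >> 2)

if __name__ == "__main__":
    for value in (0xFFFF, 0xF800, 0xE000):
        print(hex(value), rgb565_to_rgb333(value))
```

With only eight levels per channel, nearby shades fall into the same bucket. Here 0xF800 and 0xE000 are two different reds in RGB565 but both come out as (7, 0, 0).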

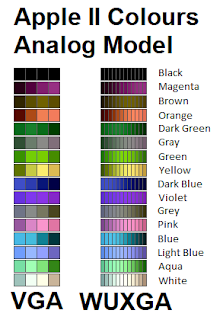

Using high definition (WUXGA):

The above chart shows how much difference there is between the colour spread of a 1:1 pixel translation and that of a 1:3 pixel translation. However, since the OpenEmulator's output model is very smooth, using high definition does not improve the output on a standard size monitor. Where high definition may become advantageous is when trying to smooth out a digital model or where each pixel becomes large and blocky, i.e. when viewing the image on a large screen. It's something I have not needed as much as I thought I would, but it's there now in case I need it.

I'm not a fan of the 1920x1080 / 1080p / Full HD resolution. It doesn't allow for a nice integer scaling of the Apple II display. Therefore, I haven’t spent much time in getting this resolution to work.
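The scaling arithmetic can be checked quickly. This sketch assumes the Apple II frame is treated as 560x384 (192 lines, each doubled), which is an assumption made for the example, and looks for the largest whole-number scale that fits each screen.

```python
APPLE_II_FRAME = (560, 384)  # assumed: 560 pixels wide, 192 lines doubled

def largest_integer_scale(screen_width, screen_height, frame=APPLE_II_FRAME):
    """Largest whole-number scale at which the frame still fits the screen."""
    frame_width, frame_height = frame
    return min(screen_width // frame_width, screen_height // frame_height)

def scaled_size(screen_width, screen_height, frame=APPLE_II_FRAME):
    """Size in screen pixels of the frame at its largest integer scale."""
    scale = largest_integer_scale(screen_width, screen_height, frame)
    return (frame[0] * scale, frame[1] * scale)

if __name__ == "__main__":
    for name, width, height in (("WUXGA", 1920, 1200), ("1080p", 1920, 1080)):
        print(name, largest_integer_scale(width, height),
              scaled_size(width, height))
```

Under that assumption WUXGA fits a scale of 3 (1680x1152), which nearly fills the screen, while 1080p only fits a scale of 2 (1120x768) because 1152 lines will not fit in 1080.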

Conclusion:

All my equipment is PAL based and the best composite output I have is from a IIc Modulator/Adapter. Hence, I'm not in the best position to be comparing results to actual hardware and fine tuning the results. If you are interested in Apple II colour models, below are a few good links to resource material.

OpenEmulator

https://observablehq.com/@zellyn/apple-ii-ntsc-emulation-openemulator-explainer

https://zellyn.github.io/apple2shader/

https://github.com/gabrieldiego/tg/blob/master/ref/Keith%20Jack%20-%20Video%20Demystified%20-%20A%20Handbook%20for%20the%20Digital%20Engineer%204e.pdf

ii-pix

https://www.youtube.com/watch?v=JpyGDqhcFlA

https://github.com/KrisKennaway/ii-pix

Stephen A. Edwards method

https://www.cs.columbia.edu/~sedwards/papers/edwards2009retrocomputing.pdf

AppleWin - Color (Composite Idealized)

https://lukazi.blogspot.com/2017/03/double-high-resolution-graphics-dhgr.html

https://lukazi.blogspot.com/2015/05/a2-video-streamer-colour.html

Other

https://nerdlypleasures.blogspot.com/2021/10/apple-ii-composite-artifact-color-ntsc.html

https://www.kansasfest.org/wp-content/uploads/2009-ferdinand-video.pdf

https://www.kreativekorp.com/miscpages/a2info/munafo.shtml

https://docs.google.com/spreadsheets/d/1rKR6A_bVniSCtIP_rrv8QLWJdj4h6jEU1jJj0AebWwg/edit#gid=0

Thanks go to Kris for his help on the ii-pix conversion, Marc for implementing OpenEmulator and Zellyn for making the OpenEmulator code more accessible.

Who knows where this will go? I've been playing around with my own digital and analog models. To date, they have not been as impressive as the models detailed in this blog. To me the A2VGA is more than just an Apple II video card. It's a system that allows me to play around with various Apple II colour models. Now, all I need is more play time. I plan on covering how the A2VGA works and how all this was implemented in a session at the next OzKFest event in late October 2023.